(Enn) Nafnlaus 🇮🇸 🇺🇦

@nafnlaus.bsky.social

The lore in my head is that they organize on social media to commit people's deadnames to the blockchain to make them permanent ;)

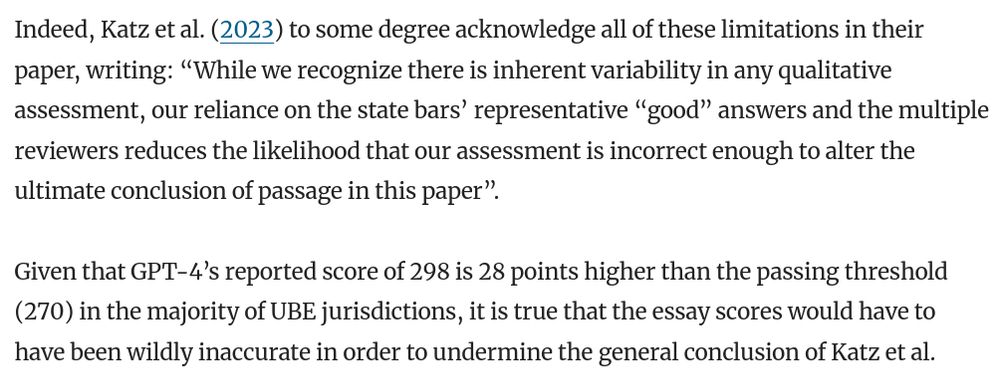

Now I'm picturing transphobes who are really into Bitcoin and making transphobic NFTs. ;)

Lol. Reminds me of when I was trying to generate an oryx on SD 1.5, where you had to include prompt terms like "Award-winning 8K photograph", and it gave me an oryx branded with "8K" ;)

Exactly what harm are they doing by making pictures of guys they find attractive in their spare time? None. You're just attacking a member of the community, that's all you're doing.

You're condemning a queer person as you speak.

Your beef with AI has nothing to do with pride.

Okay, I have to ask: I've seen a million "women prefer bears" jokes lately. What's this based on? :)

Like, very observant of you, I guess? Is this Point Out People's Hobbies Day?

It's a ridiculous valuation. Grok is not at all a revolutionary architecture, and is heavily undertrained for its parameter count. Might as well value tiiuae like that for Falcon. :Þ. And "Twitter data" is not worth billions.

A big difference, of course, being that if your cat wants attention, it'll probably come to you, whereas the parrot may sit on the other side of the room, too lazy to travel to you, calling you by name and demanding that you come to it until you finally give in ;)

Honestly, parrot and cat personalities aren't all that different ;) They refuse to settle into hierarchies (not "this person is in charge", but rather "this person won TODAY, we'll try again tomorrow"), demand attention on THEIR schedule, can hold grudges & bigotries or get defensive of certain things, etc.

It's time to plant the garden :) Also working on a new song. Will need to decide soon whether to use my voice or not.

I have no idea. Like I said, I think "ragebaiting for clicks" is the *best case* scenario here. The worst case is a deliberate desire to push an agenda. :Þ

(Not that his mother is perfect either, she's a hardcore enabler. Her golden boy can do no wrong)

There's always the nature vs. nurture question. The big difference between Elon and Kimbal is that Kimbal was older when his parents divorced, understood what a terrible person his father was, and insisted on living with his mother. Elon, by contrast, grew up with his father, and he becomes more like him daily.

That said, I can't give him too much of a pass, because he does nothing to check Elon's power - and as a board member, he could certainly try. If anyone is going to launch an intervention, I'd expect it to be him and his sister Tosca (an indie director who stays out of the limelight). But no dice.

Seems pretty common. Musk's brother Kimbal, for example, is an anti-Trump, trans-supportive hippie who's married to a bi woman and tries to use whatever media coverage he gets to encourage people to plant trees.

www.youtube.com/watch?v=csZx...

Elon once unfollowed him over a pro-trans statement.

Kimbal Musk: I'm excited about Plant a Seed Day

I've said it many times before, but I'm really glad there are people like you working on nuclear safety, rather than people with little imagination about how failures can compound in complex, out-of-the-blue, or just plain stupid ways :)

One might ask, though: why would you add an LLM or LMM into the mix? For something like a greenhouse, sure, there might be no need. But for more complex tasks, where vision, broader knowledge, human action, or an understanding of cause and effect come into the picture, it's what you'd use.

It was just a research project, but it was a large industry-sponsored research project, and I'm sure owners were salivating at those sorts of results.

You'll get the exact same thing in other industries. AIs are, as a general rule, good at optimizing things.

... to far outperform humans. The only problem was that at certain times it really cranked the heat up, yet also scheduled labour for those times, which wasn't comfortable for the workers; they said they planned to incorporate worker comfort into later models.

Basically, they collected a ton of data from sensors (weather, etc.) and from worker reports about the plants, their yields, and their quality parameters, and the AI was tasked with minimizing costs (labour, fertilizer, water, power, heat, etc.) and maximizing yield times quality. And it used unconventional strategies...

AI is working its way into bloody everything. For example (and this isn't even new), about a year and a half ago, as part of my horticulture work, I watched a lecture about it being employed to manage greenhouses for tomato cultivation (TL;DR: it did an *amazing* job vs. humans).
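As a rough illustration of the optimization target described in this thread (minimize labour, fertilizer, water, power, and heat costs while maximizing yield times quality), a controller might score each candidate plan like this. Everything here is hypothetical: the lecture's actual model, inputs, and optimizer aren't described, and a real system would predict yield and quality from the sensor and worker-report data rather than take them as given.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    """A hypothetical candidate management plan for one growing period."""
    name: str
    yield_kg: float          # predicted harvest (assumed given here)
    quality: float           # hypothetical quality score in [0, 1]
    costs: dict[str, float]  # e.g. labour, fertilizer, water, power, heat


def score(plan: Plan, price_per_kg: float) -> float:
    """Value of (yield x quality) minus the sum of all input costs."""
    revenue = plan.yield_kg * plan.quality * price_per_kg
    return revenue - sum(plan.costs.values())


def best_plan(plans: list[Plan], price_per_kg: float) -> Plan:
    """Pick the candidate plan with the highest score."""
    return max(plans, key=lambda p: score(p, price_per_kg))


plans = [
    Plan("conventional", 1000.0, 0.8, {"labour": 300.0, "heat": 200.0}),
    Plan("aggressive heating", 1200.0, 0.85, {"labour": 300.0, "heat": 350.0}),
]
print(best_plan(plans, price_per_kg=2.0).name)
```

Note that nothing in this objective penalizes scheduling labour during the hottest periods, which is exactly the kind of gap the worker-comfort complaint points to; one way to address it would be to add a comfort penalty as another cost term.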

Measles also has the nasty ability to make the body lose immunity it had already acquired to unrelated diseases.

"And I replied, 'Why do you say that, son?' And then my wife tapped me on the shoulder and reminded me that we don't have a son. Then the boy's form dissolved as dozens of chattering squirrels abandoned their human suit and swarmed out the window."

It's like looking down on gymnosperm reliance on tracheids rather than vessels. But most of them, not being deciduous, don't *need* fast water conduction because they have no spring rush to regrow lost leaves!

You can't fault an organism for not having something it doesn't need.

I love how they invert our genetic paradigm (haploid most of the time, diploid for reproduction) without going all weird like fungi do. :) Also, their "swimming through rain/dewdrops" fertilization is rather cute. :)

Who needs xylem and phloem when you're specialized to a surface-level niche? :)

And in that regard, it's no different from literally any other technology. No technology yet creates or improves itself. Creation and improvement are, and always have been, up to humans.

But that's a far cry from *operation*. They no more need humans to operate them than a traffic light does.

A mechanical turk requires humans to *operate*, not to be developed.

Today's models, in their current state, could be run from now until the sun goes nova without needing humans for anything more than keeping the servers powered up. Human labour only *creates or improves* models. It doesn't operate them.

Except that the lie is the claim that it was "made up".

THREAD:

Here's the paper that's the source of the reference. Read it.

link.springer.com/article/10.1...

Let's go down the list of the problems with the claims that OpenAI made it up, one by one.

Re-evaluating GPT-4’s bar exam performance - Artificial Intelligence and Law

Perhaps the most widely touted of GPT-4’s at-launch, zero-shot capabilities has been its reported 90th-percentile performance on the Uniform Bar Exam. This paper begins by investigating the methodolog...

Funny that you stopped there and didn't read further.

Re: the "tailoring", either scroll up in the article, or see this thread:

bsky.app/profile/nafn...

4) Where did this "48th percentile" number come from? Two things.

4a) Maximally vs. minimally tailored questions:

There are two ways you can ask the AI questions. You can format them up all nice and neat (such as putting quotes around the question), tell it to give ranked choices and proper...

1) The original paper about GPT-4 passing the bar exam was from Daniel Martin Katz, not OpenAI:

royalsocietypublishing.org/doi/10.1098/...

Katz is not an OpenAI employee. He's a Chicago-Kent Professor of Law, and cofounder of 273 Ventures, a legal AI company.

kentlaw.iit.edu/law/faculty-...

GPT-4 passes the bar exam | Philosophical Transactions of the Royal Society A: Mathematical, Physical and Engineering Sciences

In this paper, we experimentally evaluate the zero-shot performance of GPT-4 against prior generations of GPT on the entire uniform bar examination (UBE), including not only the multiple-choice multis...

Or run queries on Groq Playground:

console.groq.com/playground?m...

Groq is an extremely fast inference processor. You get pages and pages of responses in seconds. Exactly what sort of superhumans do you think are behind the curtain, able to type that fast?

Just stop. It's embarrassing.

5) Lastly: stop trying to make AI a Mechanical Turk. It's just not happening. You can ***literally download models and run them on your computer***. I run (and even train) models all the time on mine. They work exactly the same whether or not the computer is connected to the internet. There is NOT a person answering.

But that's ***not the same comparison***. Those who pass are already well above average test takers. Maximally tailored beats 82% of those well-above-average test takers, and minimally tailored beats 48% of them.
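Here's a simplified sketch of why the same score lands at a lower percentile among passers than among all test takers. It assumes a single pass cutoff expressed as a percentile of all takers; the inputs below are hypothetical, and this isn't necessarily how the paper computed its figures.

```python
def percentile_among_passers(overall_pct: float, cutoff_pct: float) -> float:
    """Convert a percentile rank among *all* test takers into a percentile
    rank among only those who scored above the pass cutoff.

    overall_pct: share of all takers scoring below the score (0-100).
    cutoff_pct:  share of all takers scoring below the pass cutoff (0-100).
    """
    if not 0 <= cutoff_pct < 100:
        raise ValueError("cutoff_pct must be in [0, 100)")
    if overall_pct <= cutoff_pct:
        return 0.0  # at or below the cutoff: beats none of the passers
    # Passers occupy the band (cutoff_pct, 100]; rescale the position within it.
    return 100 * (overall_pct - cutoff_pct) / (100 - cutoff_pct)


print(percentile_among_passers(90, 50))  # 80.0
print(percentile_among_passers(90, 60))  # 75.0
```

The same score drops further the higher the cutoff sits, because the comparison group gets stronger. A lower percentile among passers is a different measurement, not evidence of a worse score.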

4b) ***It Comes From Not Comparing The Same Thing***.

Katz's and OpenAI's numbers were listed as percentiles of *all test takers*. But what about *the subset who passed*, aka those who were *better than average*? Against them, maximally and minimally tailored placed in the 82nd and 48th percentiles, respectively.

First off, with the maximally tailored run for the ranked-choice questions, ***They Exceeded Katz's Numbers***. By six points. They found it placed in the ***95th*** percentile.

The minimally tailored variant by contrast still ranks in the 70th percentile.

So where did this 48% come from?

... authority and citations, give it an explicit template for the desired output, and tell it to respond as if it were taking the bar exam. Maximally tailored. Katz ran this.

OR, you can just punch the question into the prompt with no context at all. Minimally tailored.

This paper ran both.
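To make the two prompting styles concrete, here's a hypothetical sketch. The wording of the maximally tailored template is invented for illustration; it is not the prompt that Katz et al. or the re-evaluation paper actually used.

```python
def minimal_prompt(question: str) -> str:
    """Minimally tailored: the bare question, no added context."""
    return question


def tailored_prompt(question: str, choices: list[str]) -> str:
    """Maximally tailored (hypothetical wording): exam framing, quoted
    question, ranked-choice instructions, a request for authority and
    citations, and an explicit output template."""
    lettered = "\n".join(
        f"({chr(ord('A') + i)}) {choice}" for i, choice in enumerate(choices)
    )
    return (
        "You are taking the Uniform Bar Exam.\n"
        f'Question: "{question}"\n'
        f"Choices:\n{lettered}\n"
        "Rank the choices from most to least likely correct, and cite the "
        "controlling legal authority for your top choice.\n"
        "Answer in this format:\n"
        "Ranking: <letters, best first>\n"
        "Authority: <citation>\n"
    )


print(tailored_prompt("Is the contract enforceable?", ["Yes", "No"]))
```

The model and the question are identical in both cases; only the scaffolding around the question changes, which is why the two runs can score so differently.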

3) Katz himself noted this issue in his original paper, so it's hardly a "gotcha", and it doesn't change the conclusion that GPT-4 would pass the bar exam, as the authors of the new paper agree (they only raise the issue that the essay score "might" not be as high).

www.danielmartinkatz.com

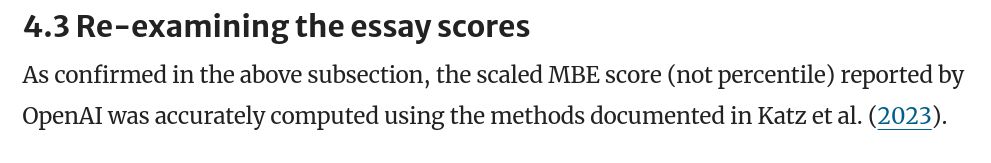

2) The source paper here CONFIRMS that the scaled MBE score was calculated correctly by Katz et al.

It does criticize the rigour of the essay grading, in that no rubric was used and the graders weren't NCBE-trained, but that's just as likely to understate GPT-4's performance as to overstate it.
