(Enn) Nafnlaus 🇮🇸 🇺🇦

@nafnlaus.bsky.social

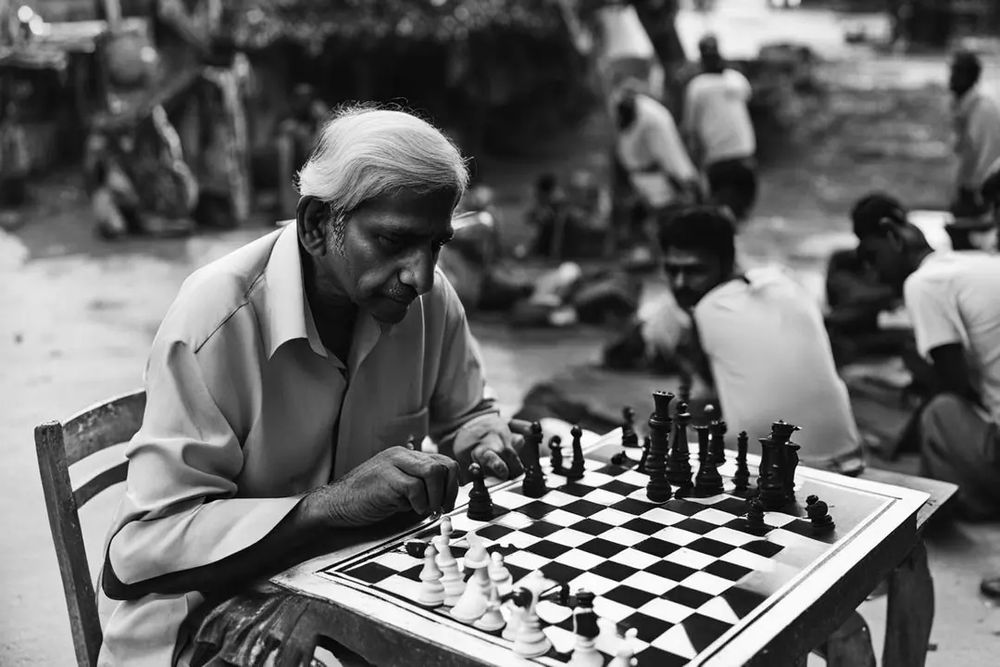

An Indian coder working in an office.

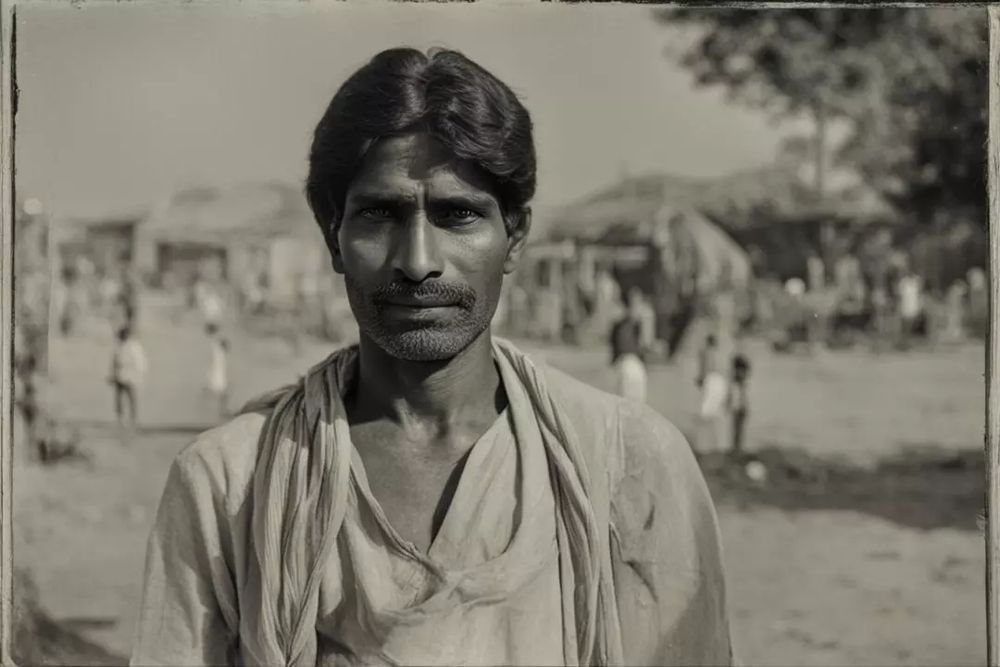

An Indian man in a field operating a tractor.

Two Indian men sitting next to each other.

Indian men with different hairstyles.

Professions: balloon seller, sculptor, photographer, driver.

(I kinda like the idea of a statue of a sculptor sculpting someone ;) )

Fourth prompt: "An Indian man cooking."

(Lots of glitches on this one... probably would have done better with square images)

Surely yelling at your allies online and organizing blocklists of anyone you've ever argued with will help. ;)

Sean Lock had a bit about how people love listening to birdsong, when what the birds are actually saying to each other is mostly pure filth ;)

Reminds me of an old webcomic where at one point a subplot involved friends getting concerned about how a girl spends all her time online chatting with a guy who's radicalizing her, and when they check up on her, her PC isn't net connected & she's been unwittingly writing both sides in Notepad.

I'd love to see what sort of technical design approach you're thinking of, given video's huge footprint. Some sort of streaming P2P magnet link with a PDS always serving one copy of the file? Though you'd probably still need different versions of the file (at least HQ, mobile, and legacy-browser versions).

Ideally:

1) Against both

2) Against

3) Against

4) Against

6) For

7) For

(#5 and #8-12 are unrelated to corporate governance)

To anyone out there who's not a Musk fan and who has a retirement fund in the market:

Consider contacting the fund managers and encouraging them to vote any TSLA shares against Musk's compensation plan, against relocation to Texas, and against James Murdoch and Kimball Musk's board reappointment.

Virtually nobody in the field of AI is thinking, "I'm working on economics." Unless they're specifically involved in making some economics specialist model.

By contrast, virtually everyone in crypto thinks they're involved in finance or investment.

Look, the person you're citing listed their source, and *their own source* says it was less than a teaspoon, *years ago*.

For a top-end model (aka not what you find on e.g. web searches), something like GPT-4, you're going to be running that on a cluster of A100s or H100s, so it's like maybe a dozen or so people playing video games for 5 seconds, or one person playing a video game for 1 minute.

It's *literally the same hardware as video games*. You can run models that outperform ChatGPT on e.g. an RTX 3090. Heck, Phi-3 outperforms ChatGPT in some tasks, and you can run that on a friggin' cell phone.
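The "dozen people gaming for 5 seconds, or one person for a minute" comparison is just GPU count times query duration. A minimal sketch, assuming (as the post does) that one server GPU busy for one second is roughly one person gaming for one second; `gamer_seconds` is a hypothetical helper, and the 12-GPU, 5-second figures are illustrative rather than a measured GPT-4 deployment:

```python
# Rough "gamer-seconds" comparison.
# Assumption (the post's rule of thumb): one GPU busy for one second is
# roughly one person gaming for one second. Real power draw varies by card.

def gamer_seconds(num_gpus: int, query_seconds: float) -> float:
    """GPU-seconds used by one query, read as seconds of one person gaming."""
    return num_gpus * query_seconds

# Hypothetical figures: one query spread across 12 server GPUs for 5 seconds.
total = gamer_seconds(num_gpus=12, query_seconds=5.0)
print(f"{total:.0f} gamer-seconds = {total / 60:.1f} minute(s) of one person gaming")
```

The same helper covers the small-model case: a query on a single consumer GPU for 5 seconds is just 5 gamer-seconds.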

"Scale" doesn't matter when talking about water *per-generation* or *per-page*.

I thought about it, but Dongcheng already existed as a small town nearby.

I think your best bet is going to be a seasonal royal hunting lodge or getaway. The entourage won't bring their children, so better odds of being royal, and if there are any local caretakers who have children, well, cushy job!

Look, *you don't have to take my word for anything*. The post you're defending gives this article:

bsky.app/profile/wolv...

Read it for yourself. Their "napkin math" is garbage. The paper shows the water consumption, *two years ago* (2020 reference), was less than a tablespoon *per page*.

Here's the one that modelled ChatGPT specifically: arxiv.org/abs/2304.03271

Making AI Less "Thirsty": Uncovering and Addressing the...

The growing carbon footprint of artificial intelligence (AI) models, especially large ones such as GPT-3, has been undergoing public scrutiny. Unfortunately, however, the equally important and...

Biology is applied chemistry

Chemistry is applied physics

Physics is applied math

And again: facts remain facts regardless of whatever sideshow topic you're trying to run here. These things run on GPUs. The same sort of thing you play video games on. 5 seconds of generation ~= 5 seconds of gaming.

You think reality is made of magic so your assessments are particularly suspect.

And facts remain facts.

bsky.app/profile/nafn...

This "buddy" literally runs LLMs herself. And the person you're defending's *own reference* says that, *two years* ago (aka when things were much *less* efficient), a *whole page of text generation* used less than a tablespoon of water.

I wasn't trying to be pedantic, just confused and trying to understand. I do now (you were expecting erase to be the same as the paint tool for some reason).

You have a nice day too :)

You can't "remove" something without knowing what's behind it. As far as I can tell, you wanted it to act like a paint tool, and just paint white. So why not just use the paint tool?

LLMs are run on GPUs. Like video gamers use. 5 seconds of generation ~= 5 seconds of gaming, more or less.

(A giant model like GPT-4 may use several high-end server GPUs at once... whereas something like Phi-3 can run even on a cell phone. Most take ~1 good consumer-grade gaming GPU.)

What do you expect to show up when you erase on an image?

Yes - the water doesn't leave the planet. It just leaves your area.

But we care vastly more about, say, how much rain is falling near Phoenix than about how much is falling over the middle of the South Pacific.

If you're running something like GPT-4, you might have several really high-end server GPUs on the same task, while something like Phi-3 is so low-resource it can run on a cell phone. ChatGPT (3.5) quality takes something like a single good home gaming GPU.
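One rough way to see why model size decides the hardware is to estimate the memory needed just to hold the weights: parameter count times bits per parameter. A minimal sketch, assuming weights dominate (KV cache, activations, and runtime overhead are ignored); `weight_memory_gb` is a hypothetical helper, Phi-3-mini is taken as its published 3.8B parameters, the 70B entry is a made-up example, and GPT-4 is left out because its size isn't public:

```python
# Back-of-envelope memory needed just to hold a model's weights.
# Assumption: weights dominate; KV cache, activations, and runtime
# overhead are ignored, so real requirements are somewhat higher.

RTX_3090_VRAM_GB = 24  # consumer gaming GPU

def weight_memory_gb(params_billions: float, bits_per_param: int) -> float:
    """Approximate weight memory in GB (1 GB = 1e9 bytes)."""
    # 1e9 params * (bits / 8) bytes each / 1e9 bytes per GB
    return params_billions * bits_per_param / 8

models = [
    ("Phi-3-mini, 4-bit", 3.8, 4),
    ("Phi-3-mini, fp16", 3.8, 16),
    ("hypothetical 70B model, fp16", 70.0, 16),
]

for name, params_b, bits in models:
    gb = weight_memory_gb(params_b, bits)
    fits = "fits" if gb <= RTX_3090_VRAM_GB else "does not fit"
    print(f"{name}: ~{gb:.1f} GB of weights ({fits} in {RTX_3090_VRAM_GB} GB)")
```

Treat these as lower bounds: a 4-bit Phi-3-mini at under 2 GB is phone-sized, while a large fp16 model overflows a single gaming card and gets split across several GPUs.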

Just think of it this way. Models are run on GPUs, like gaming GPUs. Think of how long it takes to execute a query. Just imagine a person playing a video game for that long. 5 second query = 5 seconds of gaming. Similar levels of resource consumption.

Depends on the model, of course.

(To be clear, even those obsolete numbers amount to less than a tablespoon of water. ***Per Page***, not per query)

I know you people really want to make AI inference into this massive power and water hog. Sorry, but it simply isn't, and you just can't wish it into one.

Why did you link an article that says that ChatGPT consumes only 0,004 kWh per page and the US average water consumption is 3,14l/kWh, aka 12,56 millilitres ***per page***? We'll just ignore that those numbers are obsolete (2020 reference), and power & water consumption is significantly lower now.

(To be clear, even those obsolete numbers amount to less than a tablespoon of water. ***Per Page***)

Why did you link an article that says that ChatGPT consumes only 0,004 kWh per page and the US average water consumption is 3,14l/kWh, aka 12,56 millilitres **per page**? We'll just ignore that those numbers are obsolete (2020 reference) and power & water consumption is significantly lower now.

Why did you link an article that says that ChatGPT consumes only 0,004 kWh per page and the US average water consumption is 0,55l/kWh, aka 2,2 millilitres ***per page***?

We'll just ignore that those numbers are obsolete and power & water consumption is significantly lower now.
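The per-page figures above are one multiplication: energy per page times the water intensity of the electricity, converted from litres to millilitres. A minimal sketch using the article's 0,004 kWh per page and the two intensities quoted (3,14 and 0,55 l/kWh), written with decimal points as Python requires; `water_per_page_ml` is a hypothetical helper, and the spoon sizes are standard US measures added only for comparison:

```python
# Water per page = energy per page (kWh) * water intensity (L/kWh) * 1000 mL/L
# Inputs are the 2020-era figures quoted from the linked article.

US_TABLESPOON_ML = 14.787  # 1 US tablespoon is about 14.79 mL
US_TEASPOON_ML = 4.929     # 1 US teaspoon is about 4.93 mL

def water_per_page_ml(kwh_per_page: float, litres_per_kwh: float) -> float:
    """Millilitres of water attributed to generating one page of text."""
    return kwh_per_page * litres_per_kwh * 1000

for label, intensity in [("3.14 L/kWh", 3.14), ("0.55 L/kWh", 0.55)]:
    ml = water_per_page_ml(0.004, intensity)
    print(f"{label}: {ml:.2f} mL per page "
          f"({ml / US_TABLESPOON_ML:.2f} tbsp, {ml / US_TEASPOON_ML:.2f} tsp)")
```

Both cases come in under a tablespoon per page (about 12.6 mL and 2.2 mL); only the 0,55 l/kWh figure is also under a teaspoon.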

None do. The poster later admitted that this was their own personal "napkin math".

To which I say: get a better napkin.

No, it's because the numbers in this thread don't even remotely resemble reality.

The contribution back to your specific watershed isn't measurable. You really do lose it from your watershed.